Wednesday 26 March 2014

Introducing rr

Bugs that reproduce intermittently are hard to debug with traditional techniques because single stepping, setting breakpoints, inspecting program state, etc, is all a waste of time if the program execution you're debugging ends up not even exhibiting the bug. Even when you can reproduce a bug consistently, important information such as the addresses of suspect objects is unpredictable from run to run. Given that software developers like me spend the majority of their time finding and fixing bugs (either in new code or existing code), nondeterminism has a major impact on my productivity.

Many, many people have noticed that if we had a way to reliably record program execution and replay it later, with the ability to debug the replay, we could largely tame the nondeterminism problem. This would also allow us to deliberately introduce nondeterminism so tests can explore more of the possible execution space, without impacting debuggability. Many record and replay systems have been built in pursuit of this vision. (I built one myself.) For various reasons these systems have not seen wide adoption. So, a few years ago we at Mozilla started a project to create a new record-and-replay tool that would overcome the obstacles blocking adoption. We call this tool rr.

Design

Here are some of our key design parameters:

- We focused on debugging Firefox. Firefox is a complex application, so if rr is useful for debugging Firefox, it is very likely to be generally useful. But, for example, we have not concerned ourselves with record and replay of hostile binaries, or highly parallel applications. Nor have we explored novel techniques just for the sake of novelty.

- We prioritized deployability. For example, we deliberately avoided modifying the OS kernel and even worked hard to eliminate the need for system configuration changes. Of course we ensured rr works on stock hardware.

- We placed high importance on low run-time overhead. We want rr to be the tool of first resort for debugging. That means you need to start getting results with rr about as quickly as you would if you were using a traditional debugger. (This is where my previous project Chronomancer fell down.)

- We tried to take a path that would lead to a quick positive payoff with a small development investment. A large speculative project in this area would fall outside Mozilla's resources and mission.

- Naturally, the tool has to be free software.

I believe we've achieved those goals with our 1.0 release.

There's a lot to say about how rr works, and I'll probably write some followup blog posts about that. In this post I focus on what rr can do.

rr records and replays a Linux process tree, i.e. an initial process and the threads and subprocesses (indirectly) spawned by that process. The replay is exact in the sense that the contents of memory and registers during replay are identical to their values during recording. rr provides a gdbserver interface allowing gdb to debug the state of replayed processes.

Performance

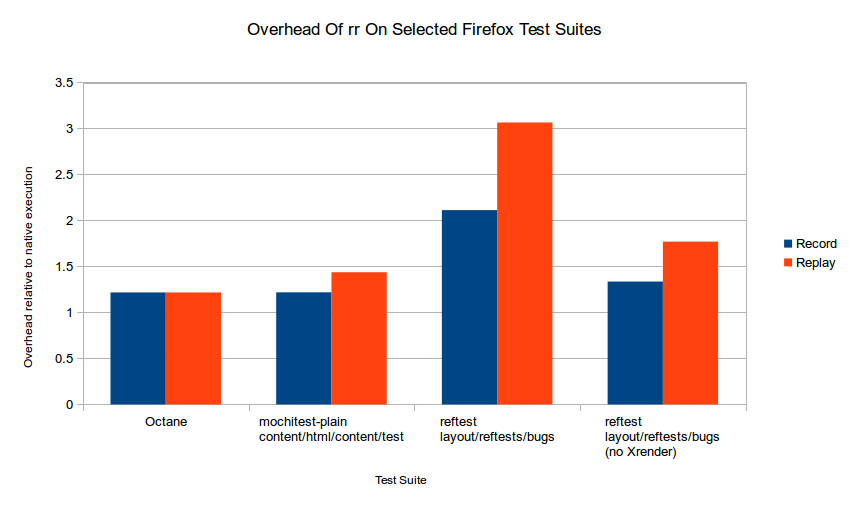

Here are performance results for some Firefox testsuite workloads:

These represent the ratio of wall-clock run-time of rr recording and replay over the wall-clock run-time of normal execution (except for Octane, where it's the ratio of the normal execution's Octane score over the record/replay Octane scores ... which, of course, are the same). It turns out that reftests are slow under rr because Gecko's current default Linux configuration draws with X11, hence the rendered pixmap of every test and reference page has to be slurped back from the X server over the X socket for comparison, and rr has to record all of that data. So I also show overhead for reftests with Xrender disabled, which causes Gecko to draw locally and avoid the problem.

These represent the ratio of wall-clock run-time of rr recording and replay over the wall-clock run-time of normal execution (except for Octane, where it's the ratio of the normal execution's Octane score over the record/replay Octane scores ... which, of course, are the same). It turns out that reftests are slow under rr because Gecko's current default Linux configuration draws with X11, hence the rendered pixmap of every test and reference page has to be slurped back from the X server over the X socket for comparison, and rr has to record all of that data. So I also show overhead for reftests with Xrender disabled, which causes Gecko to draw locally and avoid the problem.

I should also point out that we stopped focusing on rr performance a while ago because we felt it was already good enough, not because we couldn't make it any faster. It can probably be improved further without much work.

Debugging with rr

The rr project landing page has a screencast illustrating the rr debugging experience. rr lets you use gdb to debug during replay. It's difficult to communicate the feeling of debugging with rr, but if you've ever done something wrong during debugging (e.g. stepped too far) and had that "crap! Now I have to start all over again" sinking feeling --- rr does away with that. Everything you already learned about the execution --- e.g. the addresses of the objects that matter --- remains valid when you start the next replay. Your understanding of the execution increases monotonically.

Limitations

rr has limitations. Some are inherent to its design, and some are fixable with more work.

- rr emulates a single-core machine. So, parallel programs incur the slowdown of running on a single core. This is an inherent feature of the design. Practical low-overhead recording in a multicore setting requires hardware support; we hope that if rr becomes popular, it will motivate hardware vendors to add such support.

- rr cannot record processes that share memory with processes outside the recording tree. This is an inherent feature of the design. rr automatically disables features such as X11 shared memory for recorded processes to avoid this problem.

- For the same reason, rr tracees cannot use direct-rendering user-space GL drivers. To debug GL code under rr we'll need to find or create a proxy driver that doesn't share memory with the kernel (something like GLX).

- rr requires a reasonably modern x86 CPU. It depends on certain performance counter features that are not available in older CPUs, or in ARM at all currently. rr works on Intel Ivy Bridge and Sandy Bridge microarchitectures. It doesn't currently work on Haswell and we're investigating how to fix that.

- rr currently only supports x86 32-bit processes. (Porting to x86-64 should be straightforward but it's quite a lot of work.)

- rr needs to explicitly support every system call executed by the recorded processes. It already supports a wide range of syscalls --- syscalls used by Firefox --- but running rr on more workloads will likely uncover more syscalls that need to be supported.

- When used with gdb, rr does not provide the ability to call program functions from the debugger, nor does it provide hardware data breakpoints. These issues can be fixed with future work.

Conclusions

We believe rr is already a useful tool. I like to use it myself to debug Gecko bugs; in fact, it's the first "research" tool I've ever built that I like to use myself. If you debug Firefox at the C/C++ level on Linux you should probably try it out. We would love to have feedback --- or better still, contributions!

If you try to debug other large applications with rr, you will probably encounter rr bugs. Therefore we are not yet recommending rr for general-purpose C/C++ debugging. However, if rr interests you, please consider trying it out and reporting/fixing any bugs that you find.

We hope rr is a useful tool in itself, but we also see it as just a first step. rr+gdb is not the ultimate debugging experience (even if gdb's backtracing features get an rr-based backend, which I hope happens!). We have a lot of ideas for making vast improvements to the debugging experience by building on rr. I hope other people find rr useful as a building block for their ideas too.

I'd like to thank the people who've contributed to rr so far: Albert Noll, Nimrod Partush, Thomas Anderegg and Chris Jones --- and to Mozilla Research, especially Andreas Gal, for supporting this project.

Comments