Thursday 29 March 2018

Speeding Up `dwarfdump` With Rust

Writing a debugger for C++ on Linux, you spend a lot of time examining pretty-printed DWARF debug information using tools like readelf, objdump or dwarfdump. Unfortunately this can be quite slow. For Firefox's libxul.so, dwarfdump's pretty-printed output of just the main .debug_info is 25GiB. The standard objdump and readelf tools take about three minutes to print it to /dev/null.

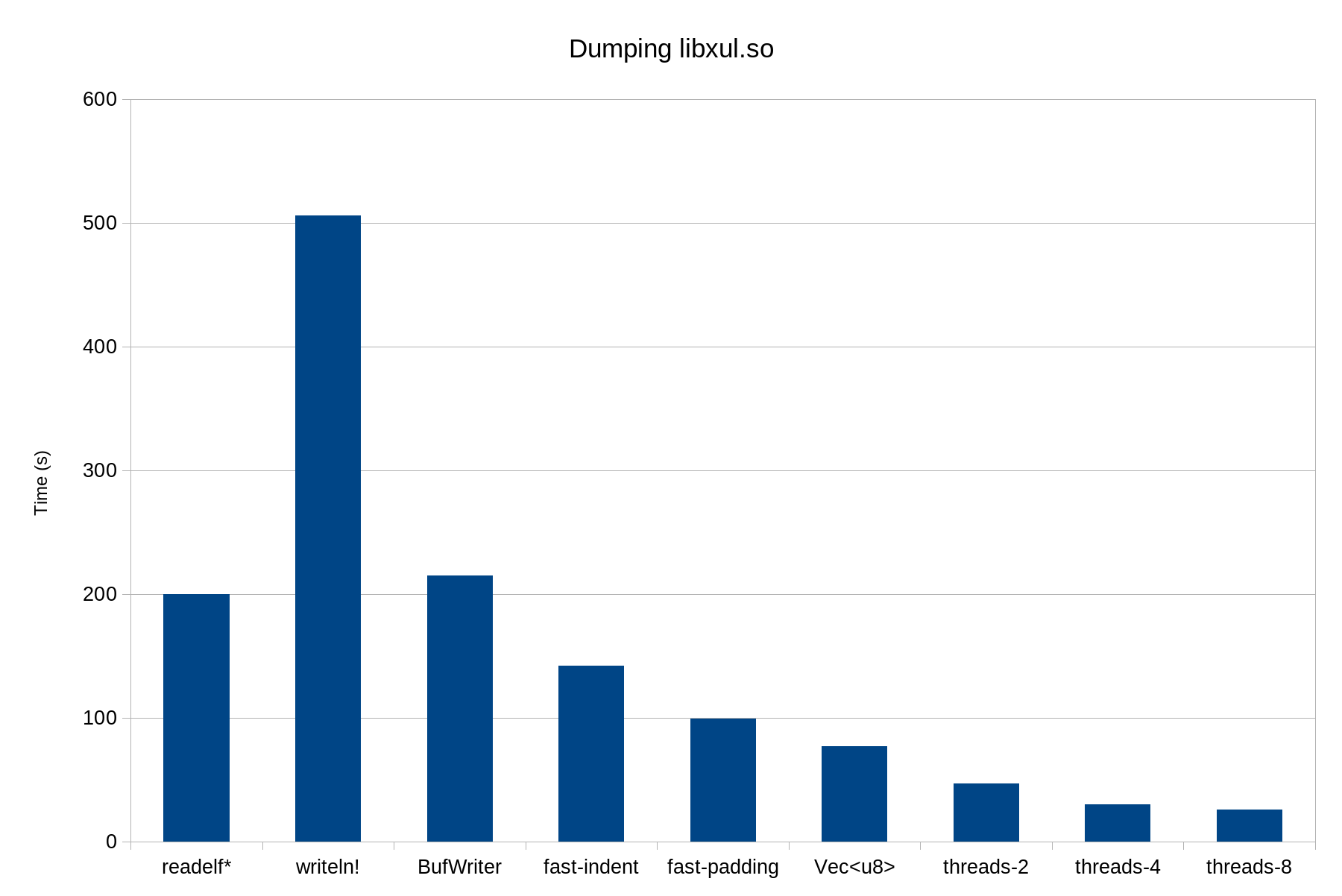

The Rust gimli crate includes a Rust-implemented version of dwarfdump. Initially it took about eight minutes to dump libxul, although the comparison is unfair because dwarfdump dumps more data than readelf. I decided to try to speed dwarfdump up. TL;DR: I reduced the dump time from 506s to 26s by fixing some simple issues and taking advantage of Rust "fearless parallelism". I think there are interesting opportunities for speeding up many kinds of command-line tools using Rust and parallelism.

Initially gimli dwarfdump was using println! to print every line of output. Every println! call temporarily locks the Rust stdout stream and performs a write system call, even when redirected to a file. This is extremely inefficient; it's much more efficient to buffer output so multiple lines are written with each system call. Since dwarfdump only used one thread, it can take the lock at the start and hold it permanently. These changes reduced the run time to 215s.

I did some profiling to look for hotspots amenable to micro-optimizations. I found that we spent a lot of CPU time in Rust's formatted-string padding code. dwarfdump used right-padding formatting of an empty string ("{:indent$}") to insert a specified number of spaces to indent each line. This is quite slow because the padding implementation writes the padded value to a temporary buffer, measures the length of the resulting string, and then writes the buffered value and the padding bytes. I changed dwarfdump to indent by printing slices from a large string of spaces, reducing the run time to 142s. I also found that where some non-empty string tokens were being right-padded, we could speed things up emitting the padding as a string slice, taking advantage of the fact that these are static strings whose lengths we know. That reduced the run time to 99s.

There are probably opportunities to improve the performance of Rust formatted text output by combining static knowledge of the format string and the types and other details of the parameters.

I then turned to parallelism. dwarfdump prints the contents of .debug_info by looping over each compilation unit, and this is easy to do in parallel — in Rust, at least. In Rust, a method that takes an immutable &self reference guarantees it can be called safely on the same object from multiple threads, so it's evident from a library's API types whether and how it can be used with multiple threads, and the compiler checks your usage. Better still, since immutability is the default, in practice Rust libraries tend to work well with multithreading, and gimli is no exception.

One important detail is that we want to output the results for compilation units in the correct order: the output for a compilation unit has to be buffered and printed to stdout after the output for all previous compilation units. You don't want to buffer the output of too many compilation units at once; each of those buffers can be tens of megabytes. I created a parallel-output utility function to handle that. It assigns compilation units to a fixed number of worker threads (N) in a strict round-robin order and ensures that a worker thread doesn't start working on a new compilation unit until the results for its previous compilation unit have been printed. Thus at most N output buffers are required. With eight worker threads (for my quad-core, eight-hardware-threads Skylake laptop) this reduced the run time to 26s.

Even with a single worker thread, run time dropped to 77s; some of the improvement actually came from changing writes from a BufWriter to a Vec<u8>. That may be due to io::Result error propagation and checking. Two threads give 47s, and four threads give 30s. Exceeding the physical cores provides negligible returns.

At peak performance gimli dwarfdump produces 1GB of output per second. It's interesting that even for relatively simple pretty-printing and such high data volume, serialized large writes to stdout are not the bottleneck. This suggests that even simple Unixy stdio-pipeline tools might benefit from internal parallelism.

Achieving this performance by improving existing C tools would have been a lot more difficult than with gimli and Rust. There's no way to be sure that the relevant DWARF processing code, e.g. binutils/dwarf.c, is safe for use on multiple threads. (Given the presence of many unadorned static variables, it probably isn't.) Efficiently switching output backends would have been more difficult than in Rust, where it's idiomatic to parameterize output code on the static type of the output backend, e.g. fn dump_info<W: Writer>(w: &mut W, ...).